Leaked Docs Show What Phones Cellebrite Can (and Can’t) Unlock

The leaked April 2024 documents, obtained and verified by 404 Media, show Cellebrite could not unlock a large chunk of modern iPhones.

Cellebrite, the well-known mobile forensics company, was unable to unlock a sizable chunk of modern iPhones available on the market as of April 2024, according to leaked documents verified by 404 Media.

The documents, which also show which Android handsets and operating system versions Cellebrite can access, provide granular insight into the very recent state of mobile forensic technology. Mobile forensics companies typically do not release details on which specific models their tools can or cannot penetrate, instead using vague terms in marketing materials. The documents obtained by 404 Media, which are given to customers but not published publicly, show how fluid and fast-moving the success, or failure, of mobile forensic tools can be, and highlight the constant cat-and-mouse game between hardware and operating system manufacturers like Apple and Google and the hacking companies looking for vulnerabilities to exploit.

Analysis of the documents also comes after the FBI announced it had successfully gained access to the mobile phone used by Thomas Matthew Crooks, the suspected shooter in the attempted assassination of former President Donald Trump. The FBI has not released details on what brand of phone Crooks used, and it has not said how it was able to unlock his phone.

The documents are titled “Cellebrite iOS Support Matrix” and “Cellebrite Android Support Matrix” respectively. An anonymous source recently sent the full PDFs to 404 Media and said they obtained them from a Cellebrite customer. GrapheneOS, a privacy- and security-focused Android-based operating system, previously published screenshots of the same documents online in May, but the material did not receive wider attention beyond the mobile forensics community.

For all locked iPhones able to run iOS 17.4 or newer, the Cellebrite document says “In Research,” meaning they cannot necessarily be unlocked with Cellebrite’s tools. For earlier versions of iOS 17, from 17.1 to 17.3.1, Cellebrite says it does support the iPhone XR and iPhone 11 series. Specifically, the document says Cellebrite recently added support to those models for its Supersonic BF [brute force] capability, which claims to gain access to phones quickly. But for the iPhone 12 and up running those operating systems, Cellebrite says support is “Coming soon.”

A SECTION OF THE IOS DOCUMENT. IMAGE: 404 MEDIA.

The iPhone 11 was released in 2019. The iPhone 12 was launched the following year. In other words, even on the versions of iOS 17 that preceded 17.4, Cellebrite was only able to unlock iPhone models released nearly five years ago.

The most recent version of iOS in April 2024 was 17.4.1, which was released in March 2024. Apple then released 17.5.1 in May. According to Apple’s own publicly released data from June, the vast majority of iPhone users have upgraded to iOS 17, with the operating system being installed on 77 percent of all iPhones, and 87 percent of iPhones introduced in the last four years. The data does not break down what percentage of those users are on each iteration of iOS 17, though.

Cellebrite offers a variety of mobile forensics tools. That includes the UFED, a hardware device that can extract data from a physically connected mobile phone. The UFED is a common sight in police departments across the country and world, and is sometimes used outside of law enforcement too. Cellebrite also sells Cellebrite Premium, a service that either gives the client’s UFED more capabilities, is handled in Cellebrite’s own cloud, or comes as an “offline turnkey solution,” according to a video on Cellebrite’s website.

That video says that Cellebrite Premium is capable of obtaining the passcode for “nearly all of today’s mobile devices, including the latest iOS and Android versions.”

That claim does not appear to be reflected in the leaked documents, which show that, as of April, Cellebrite could not access locked iPhones running iOS 17.4.

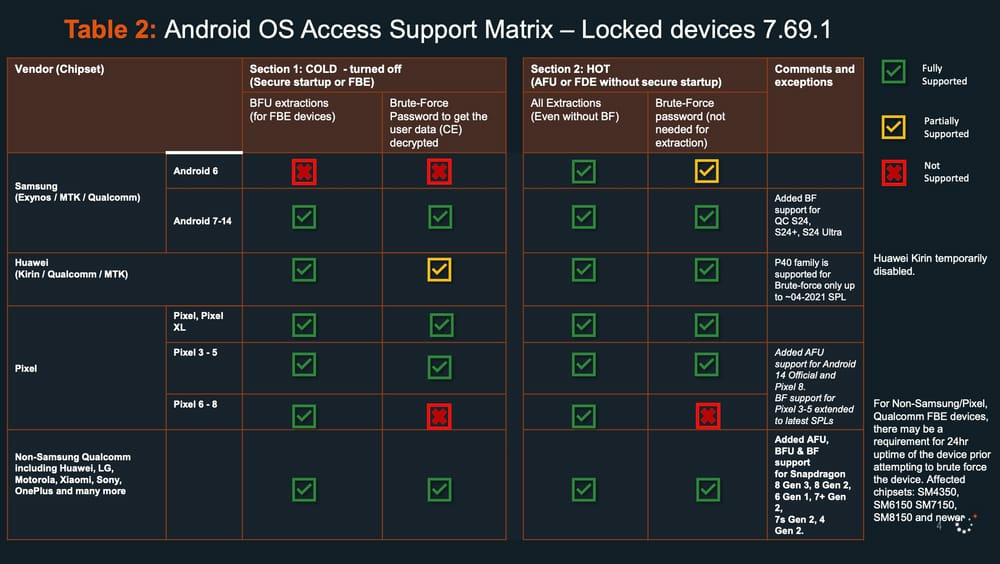

The second document shows that Cellebrite does not have blanket coverage of locked Android devices either, although it covers most of those listed. Cellebrite cannot, for example, brute force a Google Pixel 6, 7, or 8 that has been turned off to get the user’s data, according to the document. The most recent version of Android at the time of the Cellebrite documents was Android 14, released October 2023. The Pixel 6 was released in 2021.

A SECTION OF THE ANDROID DOCUMENT. IMAGE: 404 MEDIA.

Cellebrite confirmed the authenticity of the documents in an emailed statement to 404 Media. “Similar to any other software company, the documents are designed to help our customers understand Cellebrite’s technology capabilities as they conduct ethical, legally sanctioned investigations—bound by the confines of a search warrant or an owner’s consent to search. The reason we do not openly advertise our updates is so that bad actors are not privy to information that could further their criminal activity,” Victor Ryan Cooper, senior director of corporate communications and content at Cellebrite, wrote.

“Cellebrite does not sell to countries sanctioned by the U.S., EU, UK or Israeli governments or those on the Financial Action Task Force (FATF) blacklist. We only work with and pursue customers who we believe will act lawfully and not in a manner incompatible with privacy rights or human rights,” the email added. In 2021 Al Jazeera and Haaretz reported that a paramilitary force in Bangladesh was trained to use Cellebrite’s technology.

Cellebrite is not the only mobile forensics company targeting iOS devices. Grayshift makes a product called the GrayKey, which originally was focused on iOS devices before expanding to Android phones too. It is not clear what the GrayKey’s current capabilities are. Magnet Forensics, which merged with Grayshift in 2023, did not immediately respond to a request for comment.

Cellebrite’s Android-focused document also explicitly mentions GrapheneOS in two tables. GrapheneOS is an operating system the privacy-conscious might use, but 404 Media has also spoken to multiple people in the underground industry selling secure phones to drug traffickers who said some of their clients have moved to using GrapheneOS in recent years.

Daniel Micay, founder of GrapheneOS, told 404 Media that GrapheneOS joined a Discord server whose members include law enforcement officials and which is dedicated to discussions around mobile forensics. “We joined and they approved us, with our official GrapheneOS account, but it seems some cops got really mad and got a mod to ban us even though we didn’t post anything off topic or do anything bad,” Micay said.

There is intense secrecy around the community of mobile forensics experts that discuss the latest unlocking tricks and shortcomings with their peers. In 2018 at Motherboard, I reported that law enforcement officials were trying to hide their emails about phone unlocking tools. At the time, I was receiving leaks of emails and documents from inside mobile forensics groups. In an attempt to obtain more information, I sent public records requests for more emails.

“Just a heads up, my department received two public records request[s] from a Joseph Cox at Motherboard.com requesting 2 years of my emails,” a law enforcement official wrote in one email to other members. I learned of this through a subsequent leak of that email. (404 Media continues to receive leaks, including a recent set of screenshots from a mobile forensics Discord group).

Google did not respond to a request for comment. Apple declined to comment.

Oof, that definitely doesn’t look good from a security standpoint.

That’s not surprising

We need a deep dive

Exploiting security vulnerabilities is always a game of cat-and-mouse.

It’s best to set your phone to automatically update to the newest software available to keep it secure.

This assumes the official upstream is not compromised. Apple absolutely will push new vulnerabilities in updates when requested to do so by higher-level law enforcement and/or the NSA, etc. The FBI is also known to have placed moles in app vendor teams.

I’ve read about the NSA exploiting third-party push notification systems from Snowden. I’ve only read the opposite of your claim about Apple providing a backdoor for the government. Do you have a source so I can read more about it?

Apple is a US based company. They are legally required to comply and not openly speak about it when a court asks them to do so.

Same for Google obviously, but since their software on end-devices is much more diverse, it is much harder to do.

Apple’s legal policy is very clear. They do not have access to data on customer devices, and therefore cannot provide said data to law enforcement or government officials.

They’ve fought the backdoor request in court 11 times and won. They are not mandated to comply by law if they themselves do not have access.

They can and will provide iCloud data in response to a warrant.

https://www.apple.com/legal/privacy/law-enforcement-guidelines-us.pdf

This has nothing to do with what I wrote.

You claimed that Apple intentionally places vulnerabilities in updates to allow governmental access, right?

They’ve repeatedly claimed in court that they do not have access to on-device data or transmissions, as stated in their legal privacy policy. If they created a vulnerability, then they could exploit it, making the above claims both perjury and breach of contract.

Please clarify if I misunderstood your comment.

don’t bother with apple fanboys. apple can’t be wrong.

This is an irrelevant and stupid comment. If Apple is required by a gag order not to disclose an exploit, their legal policy would not violate the gag order.

You think if they did build an exploit after a demand they would write about it in their legal notices? That’s just dumb.

It’s not a matter of “have they done it”; it’s just that the US feds can legally force any corporation to do as they please under threat of violence while binding them with gag orders.

Even if there is no proof so far, there is no reason to believe that they haven’t done so, because they legally can.

It’s also specifically disallowed to deploy any kind of system that automatically notifies the public in case law enforcement compromises a company’s servers as part of an investigation. Apple used to have such a warrant canary, but they removed it some years ago.

See Warrant Canary

They do not have access to data on customer devices. Therefore, they cannot be compelled to provide it. Apple repeatedly challenges and wins cases against the government’s request for a backdoor to their devices.

They can and will provide iCloud data in response to a warrant.

It’s a closed-source operating system, dude; they can do whatever they want. Just because there are lawsuits doesn’t mean they don’t have a backdoor to your phone.

They can literally force Apple to pretend that they didn’t give them a backdoor. There are basically no limits to law enforcement powers as long as they say the word “terrorism”.

I’m not for trusting corporations either, but you don’t have a source, only theories.

Snowden is pretty much the authority on NSA vulnerabilities, and he hasn’t released any proof that Apple has a backdoor on their devices. The only thing he’s demonstrated is how the NSA has used MITM on third-party push notifications, which Apple has since encrypted and relayed to obfuscate.

If you have a source, I’m down to read it. Otherwise, you’re just speculating.

The conversation below this message goes really off the rails.

If anyone wants to look into the actual legal theory of compelling businesses to do things, look up national security letters.

And yes, the legal framework exists to compel businesses to do something for national security purposes and not disclose that they’ve done so.

I just want to remind everybody fighting below: security is about capabilities, not intentions. It doesn’t matter whether a company would refuse to do something if it had a choice, just that it can do it. That’s how you model threats: by what people can do, not what you think they will do.

Not Apple exclusive though

So in short newer Pixel and iPhone models seem to be the most resilient to these attacks, with every iPhone able to run iOS 17.4 (XS/XR or newer) currently not attackable.

But obviously an attacker in possession of the device can wait for an exploit to be found on whatever OS version the device is running.

By far the best protection, then, is to set a strong passphrase, so that even if/when your device or OS has known vulnerabilities that allow brute-force attacks, the passphrase is too complex to be brute-forced in a realistic amount of time.
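To put rough numbers on “too complex to be brute-forced,” here is a minimal Python sketch of the worst-case time to try every possible secret. The rate of 10 guesses per second is a made-up placeholder, not a measured figure for any phone or Cellebrite tool, and the calculation assumes the secret was chosen uniformly at random.

```python
# Worst-case time to exhaust a keyspace at a fixed guess rate.
# ASSUMPTION: the rate below is a hypothetical placeholder, not a
# measured figure for any real phone or forensic tool.

SECONDS_PER_YEAR = 365.25 * 24 * 3600


def exhaust_seconds(alphabet_size: int, length: int, guesses_per_second: float) -> float:
    """Seconds needed to try every secret of `length` symbols from an alphabet."""
    keyspace = alphabet_size ** length
    return keyspace / guesses_per_second


if __name__ == "__main__":
    rate = 10.0  # hypothetical guesses per second
    cases = [
        ("6-digit PIN", 10, 6),
        ("10-char lowercase + digits", 36, 10),
    ]
    for label, alphabet, length in cases:
        years = exhaust_seconds(alphabet, length, rate) / SECONDS_PER_YEAR
        print(f"{label}: {years:.3g} years")
```

At that assumed rate the PIN falls in about a day while the longer passcode takes millions of years; on average an attacker needs about half the worst-case time.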

Are we looking at the same chart? It says they can do BFU extraction on all Pixel phones.

I was talking about the “Brute-Force Password to get the user data (CE) decrypted” column, which is probably the more interesting part.

Can we create a situation where brute force is infeasible while using a 6-digit PIN? That would give us security and convenience at the same time. Using a passphrase isn’t that feasible for me, as my Samsung phone randomly prompts for the PIN when I need it most.

Can we create a situation where brute force is infeasible while using a 6-digit PIN?

According to this comment from GrapheneOS, the latest Pixels and iPhones are not brute forceable with a 6+ digit PIN:

Pixel 6 and later or the latest iPhones are the only devices where a random 6 digit PIN can’t be brute forced in practice due to the secure element.
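The secure element helps because it, not the attacker’s hardware, decides how fast guesses can be made. The sketch below uses a hypothetical escalating-delay schedule (five free attempts, then 30 seconds doubling per failure, capped at one day). These numbers are illustrative assumptions, not the actual throttling policy of the Titan M2, the Secure Enclave, or any other chip.

```python
# Total waiting time imposed by a hypothetical escalating-delay throttle.
# ASSUMPTION: FREE_ATTEMPTS, BASE_DELAY and MAX_DELAY are illustrative values,
# not the real policy of any secure element.

FREE_ATTEMPTS = 5
BASE_DELAY = 30.0      # seconds before the first throttled attempt
MAX_DELAY = 86_400.0   # cap each delay at one day
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def throttle_delay(attempt: int) -> float:
    """Delay in seconds enforced before failed attempt number `attempt` (0-based)."""
    if attempt < FREE_ATTEMPTS:
        return 0.0
    # Cap the exponent so 2 ** n never grows large enough to overflow a float.
    exponent = min(attempt - FREE_ATTEMPTS, 32)
    return min(BASE_DELAY * 2 ** exponent, MAX_DELAY)


def total_wait(attempts: int) -> float:
    """Sum of enforced delays across the first `attempts` guesses."""
    return sum(throttle_delay(i) for i in range(attempts))


if __name__ == "__main__":
    pin_space = 10 ** 6  # every 6-digit PIN
    years = total_wait(pin_space) / SECONDS_PER_YEAR
    print(f"Worst case for a 6-digit PIN: about {years:,.0f} years")
```

Under these assumptions the enforced delays alone add up to thousands of years, so the real question becomes whether the throttle itself can be bypassed.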

Not really. A 6-digit PIN gives you ~20 bits of entropy so it’ll be cracked in no time. The only protection you’re relying on is the hardware and the OS, and according to the Cellebrite compatibility table it’s mostly a question of when a vulnerability is found, not if.
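The “~20 bits” figure is log2 of the number of possible PINs. A small Python sketch, assuming each symbol is picked uniformly at random (human-chosen PINs and passwords have less entropy than this):

```python
import math


def entropy_bits(alphabet_size: int, length: int) -> float:
    """Entropy of a uniformly random secret: length * log2(alphabet_size)."""
    return length * math.log2(alphabet_size)


if __name__ == "__main__":
    print(round(entropy_bits(10, 6), 2))   # 6-digit PIN -> 19.93
    print(round(entropy_bits(62, 10), 2))  # 10 chars from a-z, A-Z, 0-9
```

Each extra random digit adds only about 3.3 bits, whereas each extra character from a 62-symbol alphabet adds about 6.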

So it’s a trade-off between security and usability.

I use a ten-digit alphanumeric passcode. I rarely have to type it with Face ID.

If you live in the USA, the cops can now legally force you to unlock your phone using biometrics. And I mean I guess they can also illegally (and who’s gonna stop them) force you to open your phone with a passcode or pattern as well. But it’s a lot easier for them to hold your finger on a scanner or your phone to your face than make you type in the code.

Absolutely. If you have an iPhone, press the side button 5 times to disable Face ID or Touch ID. We should all be aware of how to disable biometrics on our devices.

Be careful doing that. It could be considered tampering with evidence

a trap at every turn.

You have anything to back that up or just speculation?

I have a routine that unless my phone is connected to my wifi, it turns off biometrics and only uses pattern.

And I mean I guess they can also illegally (and who’s gonna stop them) force you to open your phone with a passcode or pattern as well.

You would stop them. By not giving it to them.

Of course there’s always torture, which cops have been known to do, but is probably “unlikely”.

Couldn’t you just add time to the equation? Just make the hash really hard

6 digits is still kind of weak, but you could bump it up, just not so much that it takes forever to enter

Simply making the hash really hard is not a good option. All most people will notice is that their underpowered phone suddenly takes way longer to unlock than before, while cracking the hash on very powerful hardware stays ‘trivial’.
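The trade-off shows up with any standard key-stretching function. This sketch uses PBKDF2 from Python’s hashlib purely as an illustration; it is an assumption that this is the kind of hash meant, and neither Android nor iOS derives its keys this simply, since both also bind the derivation to a hardware key. Raising the iteration count makes every guess cost more on the phone and on the attacker’s machine alike, so the ratio between them does not change.

```python
import hashlib
import os
import time


def derive_key(pin: str, salt: bytes, iterations: int) -> bytes:
    """Stretch a PIN into a 32-byte key with PBKDF2-HMAC-SHA256."""
    return hashlib.pbkdf2_hmac("sha256", pin.encode(), salt, iterations)


def seconds_per_guess(iterations: int, trials: int = 3) -> float:
    """Average wall-clock cost of one derivation on this machine."""
    salt = os.urandom(16)
    start = time.perf_counter()
    for _ in range(trials):
        derive_key("123456", salt, iterations)
    return (time.perf_counter() - start) / trials


if __name__ == "__main__":
    pin_space = 10 ** 6
    for iterations in (10_000, 100_000):
        cost = seconds_per_guess(iterations)
        # One core trying every 6-digit PIN; an attacker with N cores divides this by N.
        print(f"{iterations:>7} iterations: {cost * 1000:.1f} ms/guess, "
              f"{cost * pin_space / 3600:.1f} h to try every PIN on one core")
```

Whatever delay the owner tolerates per unlock, an attacker without hardware throttling can offset it simply by adding cores.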

As the other comment mentioned, a hardware solution seems to be the only one.

Hardware solutions involve a lot of trust and assumptions. All it takes is a little hardware flaw or simple bus injection and you are toast.

You could offload cryptography to the dedicated hardware, but that is already pretty common

newer Pixel and iPhone models seem to be the most resilient to these attacks

Pretty much exactly what one would expect, honestly. Especially with so many Android OEMs lacking critical security updates.

Not phone-specific, but Signal had a great blog post on Cellebrite a while back.

By a truly unbelievable coincidence, I was recently out for a walk when I saw a small package fall off a truck ahead of me. … Inside, we found the latest versions of the Cellebrite software, a hardware dongle designed to prevent piracy (tells you something about their customers I guess!), and a bizarrely large number of cable adapters.

I guess it makes sense Signal works with the mafia

My Pixel 8 with GrapheneOS, a password of 35 digits, and USB completely off sends its regards

Tbh it depends on who’s trying to break in and what their interest in you is. If it’s law enforcement, unless you’re of unique national significance (eg you assassinated the national leader, you blew the whistle on some really sensitive documents, you carried out a huge terror attack), when law enforcement seize someone’s electronics and can’t get in the usual ways, they normally just give up. If you are uniquely wanted by the government, they may try to torture the password out of you. But most likely they’ll decide it’s not worth the effort if they can’t break into it with their standard methods. As for non-state actors, yeah, the hitting-over-the-head-with-a-wrench method is more likely, although I can’t think of why a non-governmental body would want to read the contents of your laptop so badly.


Yes


a good approach, indeed lol

How do you remember 35 digits?

If you use dicewords it’s honestly pretty easy to remember. My master password for bitwarden is over 50 characters and it was a breeze to remember.

I’m even thinking about changing my password, but I’m not sure whether dicewords have higher entropy and are more resistant to brute-force attacks than a password of “random” letters and numbers. What do you think?

There is a very relevant xkcd for this exact question.

TL;DR: dicewords are the better option. You can still add numbers and symbols, and capitalize, if you really want even more entropy.

Dicewords are usually better because they tend to be longer. But a random-character password of the same length as a strong diceware passphrase would be stronger, just because each of its characters can take a wider range of values (with a passphrase it’d be alphanumeric + spaces).

I mixed some shorter passwords that I’m used to using and added some random symbols
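Both points in this exchange come down to the same entropy arithmetic. A minimal Python sketch, assuming a standard 7,776-word Diceware list (6^5 words, one per roll of five dice), words chosen uniformly at random, and a 37-symbol alphabet (a to z, 0 to 9, space) for the random-character comparison; the 30-character length is an arbitrary example.

```python
import math

DICEWARE_LIST_SIZE = 7776  # 6 ** 5: one word per roll of five dice


def passphrase_bits(words: int, list_size: int = DICEWARE_LIST_SIZE) -> float:
    """Entropy of a passphrase of `words` words drawn uniformly from the list."""
    return words * math.log2(list_size)


def random_chars_bits(length: int, alphabet_size: int) -> float:
    """Entropy of `length` characters drawn uniformly from an alphabet."""
    return length * math.log2(alphabet_size)


if __name__ == "__main__":
    # ASSUMPTION: a 6-word passphrase runs to roughly 30 characters with spaces.
    print(f"6 Diceware words:          {passphrase_bits(6):.1f} bits")
    print(f"30 random [a-z0-9 ] chars: {random_chars_bits(30, 37):.1f} bits")
```

Per character the passphrase carries far less entropy, which is why an equally long random string is stronger; the passphrase wins on memorability for a given strength, and each extra word adds about 12.9 bits.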

Is that a 35-digit PIN or a 35-character password?

characters

Wat

The main takeaway from this is not that these phones are absolutely secure now and forever; it’s just that the newer phones are secure… for now.

Most security just buys you time. Might be months, might be years. But it just buys you time.

This demonstrates that basically any phone older than 2 years is completely breakable. It’s an arms race, and if you own a phone, you become a static target.

So if data is absolutely critical, it shouldn’t rely on a low-entropy password dependent on the secure element of a phone. That could be fine for things that are ephemeral or that you can change from the cloud, but not for, say, the KFC secret recipe.

That’s simply not true. The chart clearly states that Cellebrite cannot break encryption on any iPhone that can run the current version of iOS. That includes any iPhone made in the last six years.

What this illustrates is the importance of keeping your phone’s software updated for maximum security. New hardware is not necessary.

Scenario: You have the super secret KFC recipe on your phone. I steal your phone and wait until the cracking tools have caught up to the version that was on your phone. All I have to do is keep it in storage. While your phone is in storage, it’s not getting updates.

By keeping your phone updated you have EXTENDED the time phone security can keep an attacker out of your phone, but in time they will be able to get into it.

I see your point. The attacker would need to be far more savvy than police.

They’d need to keep it charged to prevent powering off and activating the connection access denial of the Secure Enclave, while keeping the iPhone in a Mylar bag to prevent any nearby iOS devices from relaying the Find My remote erase request.

With enough time, a vulnerability may be found for that version of iOS.

Probably not powered on for years; the battery would die at some point. But yes, I agree with the rest of your statement. There are protocols for cold-case phones: they’re just stored, waiting for the cracking to catch up.

Cellebrite uses Lightning/USB-C port access to bypass passcode security. Once iPhone is powered off, the Secure Enclave will deny port access until the passcode is entered. It’s not impossible, but it’ll certainly take far longer than if they keep the iPhone charging while they wait for an exploit.

Do they remove/disconnect the battery in this mode? If not, won’t the battery eventually balloon?

It’s fine if it happens. Ballooning is just a chemical breakdown of the battery. It won’t explode. Disconnecting the battery while keeping the device connected to power runs a high risk of shorting, potentially causing component damage.

Simply not charging to 100% constantly will prevent this.

I’ve had phones on a charger for years, using a simple timer, so it never gets to 100%. Pretty easy to do.

That’s really interesting. For example, it says GOS phones can’t be accessed past 2022. But if you had confiscated a GOS phone in, say, 2020 and just waited for that particular vulnerability to rear its head, you could access it and all of its contents.

100%

Just like door locks and safes, they just buy time. Hopefully whatever data was seized in 2020 isn’t going to be relevant in 2024.

Chances are they don’t need to just randomly guess keys. They can use stored data and known data such as birthdays and passwords to online services

But Apple can

Like he said, it buys you months or YEARS


I know this may be pedantic, but

it’s just that the newer phones are secure… For now.

that statement suggests that other recovery techniques (e.g. hardware decapping, state zero-days, etc.) don’t already make absolutely current devices insecure.

it would not surprise me if a TLA with physical access could recover enclave information and completely expose naked storage in 2 days or less; it’s just a matter of how urgent it is. a former president nearly losing their head might count as pretty damn urgent. meddle with the US power structure and it will protect itself, if not the particular individuals.

if you noticed any power dips in your area right after the attempt on trump, that was probably the local NSA cluster firing up.

In the context of what we know is commercially available, the new phones are reasonably secure, for now

If whatever you’re doing is worth decapping the chip and scanning the underlying electron state, then I’d be more worried about your kneecaps than your phone.

don’t already make absolutely current devices insecure.

We don’t have any evidence for this statement, and we can never prove the negative (that a device is absolutely secure).

Depends on your threat model, of course, but given the data we have available, the advice still stands: use a modern phone directly from Google/Apple and keep it updated.

Right, there is no lazy replacement for good opsec. If you are facing a state actor then you need to assume your device storage can be accessed.

We don’t have any evidence for this statement, and we can never prove the negative (that a device is absolutely secure).

does anyone have info on Crooks’s phone make/model (and an OS version guess)? I have not seen this anywhere, and if it is not already in the public domain, why not?

FBI says they got the phone contents in the clear. that gives some bounds for both known (easy) potential compromises and “oh, fuck.”

edit: typo

edit 2: still no detail but I found this…

The phone was a relatively new model, which can be harder for law enforcement to access than old phones because of newer software, according to technology experts. In many federal investigations, it can take hours, weeks or months to open a suspect’s phone.

https://www.washingtonpost.com/nation/2024/07/16/thomas-crooks-phone-motive-parents-trump-shooting/

unless this information is now a state secret, we may learn more.

couldn’t read the whole article, but I would say this is an “oh, fuck.” moment for a lot of people

The FBI tries and cannot unlock a recent phone. They allegedly turn to a private company for emergency access, and they are in within hours(?)

I think many people should reevaluate their threat model and mitigations after this. perhaps this changes nothing for you. perhaps it changes everything for you.

The better answer is to just not rely on hardware security. I wish Android had full disk encryption.

It did, but it was replaced with FBE in version 6, I believe.

Of course it was

FBE is still encryption. Still hardware-backed.

It would be nice if it would focus on Android and AOSP from a publication perspective.

However, most devices are going to be highly vulnerable, as they don’t support relocking the bootloader. Also, Android’s encryption is weak and not well implemented.

Actually worse than I thought.

So basically the USB port is the point of entry? If you could permanently fuse off the data connection in the SoC, it would be a huge improvement in security. Of course, you could then only use the port for charging.

Yes. The entire risk surface increases every time you add a new protocol. Bluetooth, NFC, charging, it’s a real problem because features keep increasing.

There are some crazy vendors who will remove a lot of that from your phone, but it’s pretty rare.

Until you want to reflash the device

Obviously the USB port will not be usable after you blow the fuses in the SoC.

GOS is spooked; time to switch to carrier pigeons and live under a bridge with a dolphin.

Archived link (no requirement to sign up): https://archive.is/1bQJG

So it seems most of this revolves around brute forcing. So using a properly long and secure password should make it nearly impossible for them right?

Kinda. If the phone is completely off, then having a very long key that is cryptographically sound would be sufficient, unless there was an exploit in the secure element itself. Even if your user key is very secure, most phones use a secure element to turn the user key into a much stronger cryptographic key. So if that derivation can be exploited in the hardware, it’s game over.

Figured it had to be something like that, seeing as changing your password doesn’t involve a long re-encryption process.
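That matches the usual design, in which the passcode only protects (wraps) a randomly generated data-encryption key, so changing the passcode means re-wrapping one small key rather than re-encrypting storage. The sketch below illustrates the idea with Python’s standard library only. The XOR “wrap” is a toy stand-in for a real key-wrapping cipher such as AES key wrap, it has no integrity protection, and it leaves out the hardware-bound key a real secure element mixes in; all function names are hypothetical.

```python
import hashlib
import os


def kek_from_password(password: str, salt: bytes) -> bytes:
    """Derive a 32-byte key-encryption key (KEK) from the password."""
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 100_000)


def xor_bytes(a: bytes, b: bytes) -> bytes:
    # Both inputs are 32 bytes here; zip() would silently truncate otherwise.
    return bytes(x ^ y for x, y in zip(a, b))


def wrap(data_key: bytes, password: str, salt: bytes) -> bytes:
    # TOY ONLY: XOR with the KEK hides the key but is not an authenticated wrap.
    return xor_bytes(data_key, kek_from_password(password, salt))


def unwrap(wrapped: bytes, password: str, salt: bytes) -> bytes:
    # XOR is its own inverse, so unwrapping repeats the same operation.
    return xor_bytes(wrapped, kek_from_password(password, salt))


def change_password(wrapped: bytes, salt: bytes, old: str, new: str) -> tuple[bytes, bytes]:
    """Re-wrap the same data key under a new password; stored data is untouched."""
    data_key = unwrap(wrapped, old, salt)
    new_salt = os.urandom(16)
    return wrap(data_key, new, new_salt), new_salt


if __name__ == "__main__":
    data_key = os.urandom(32)  # this key would encrypt the actual files
    salt = os.urandom(16)
    wrapped = wrap(data_key, "old passphrase", salt)
    wrapped, salt = change_password(wrapped, salt, "old passphrase", "new passphrase")
    print(unwrap(wrapped, "new passphrase", salt) == data_key)  # True
```

Only the 32-byte wrapped key changes when the password does, which is why the change is instant.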

lol typical isfake company
